Welcome back to the Ethical Reckoner. In this Weekly Reckoning, we cover tech-related hostage-taking, some of it more metaphorical than the rest (from the governments of Nigeria and the UK), more direct action in the form of app bans from China, plus a preview of a Meta Oversight Board case about deepfake celebrity pornography. Then, we have a special guest for the Extra Reckoning: Devin Almonor from the A.I. Ethicist!

This edition of the WR is brought to you by… the return of indoor weather in Belgium 🌧️⛄

The Reckonnaisance

Nigeria continues to hold Binance executive

Nutshell: A compliance officer for Binance has been held in Nigeria for six weeks as Nigeria tries to crack down on money laundering.

More: Tigran Gambaryan and another Binance employee, Nadeem Anjarwalla, traveled to Nigeria for a two-day business trip in February, but were held for a month without charges. Anjarwalla escaped under mysterious circumstances, and Nigeria charged the two men and Binance itself with tax evasion and money laundering, despite the fact that the two employees didn’t have decision-making power.

Why you should care: The NY Times article frames this around the legal woes of crypto companies—Binance’s former CEO recently stepped down and pled guilty to money laundering in the US, and there are more investigations worldwide—but another interesting aspect is the use of corporate hostages, which is happening in more and more countries. So-called “hostage-taking laws” require companies to have local employees to give countries leverage. We don’t have to have sympathy for crypto execs to be worried about countries trying to strong-arm companies into compliance, because sometimes it’s about money laundering, but sometimes it’s about censorship.

From the NY Times: “Only a few weeks earlier, he and a group of colleagues had rushed out of Nigeria, concerned that the local authorities might detain them, five people familiar with that trip said. This time, he assured his wife, he would ‘get in and get out.’”

UK exploring child safety pledges from social media companies

Nutshell: The UK government is planning talks with Meta, X, Google, and Apple to create a voluntary charter to protect children on social media.

More: The UK is already exploring a ban on social media for kids under the age of 16, and this to-be-developed charter could also include an obligation to notify parents when kids are repeatedly looking up “disturbing content.” It would build on the controversial Online Safety Act, which places legal responsibility on companies to keep kids from accessing “harmful content.” Presumably, it would include some incentives for compliance, or at least public shaming for companies that don’t comply.

Why you should care: The UK is committed to “making the UK the safest place to be a child online” and going big on tech safety overall, passing the Online Safety Act and hosting AI safety summits. These pledges seem similar to the US’s voluntary AI safety commitments, except they would actually make companies do things they aren’t already doing or don’t want to do. This sounds sort of appealing, but some of the UK’s efforts raise concerns about privacy and paternalism, and if the pledges shape up the way they’re rumored to, this will only add to the chorus of questions.

Meta Oversight Board takes on celebrity deepfake pornography

Nutshell: Meta’s “Supreme Court” is taking on two cases of nonconsensual deepfake pornography of female celebrities to assess how Meta’s policies address explicit AI-generated images.

More: One case is about an American celebrity, where the poster appealed after the image was taken down. The other is about an Indian celebrity, where the image wasn’t taken down after being reported and the person who reported it appealed the decision to leave it up (it was removed after the Oversight Board took on the case). The Board wants to assess whether Meta’s policies are adequate or need to be changed.

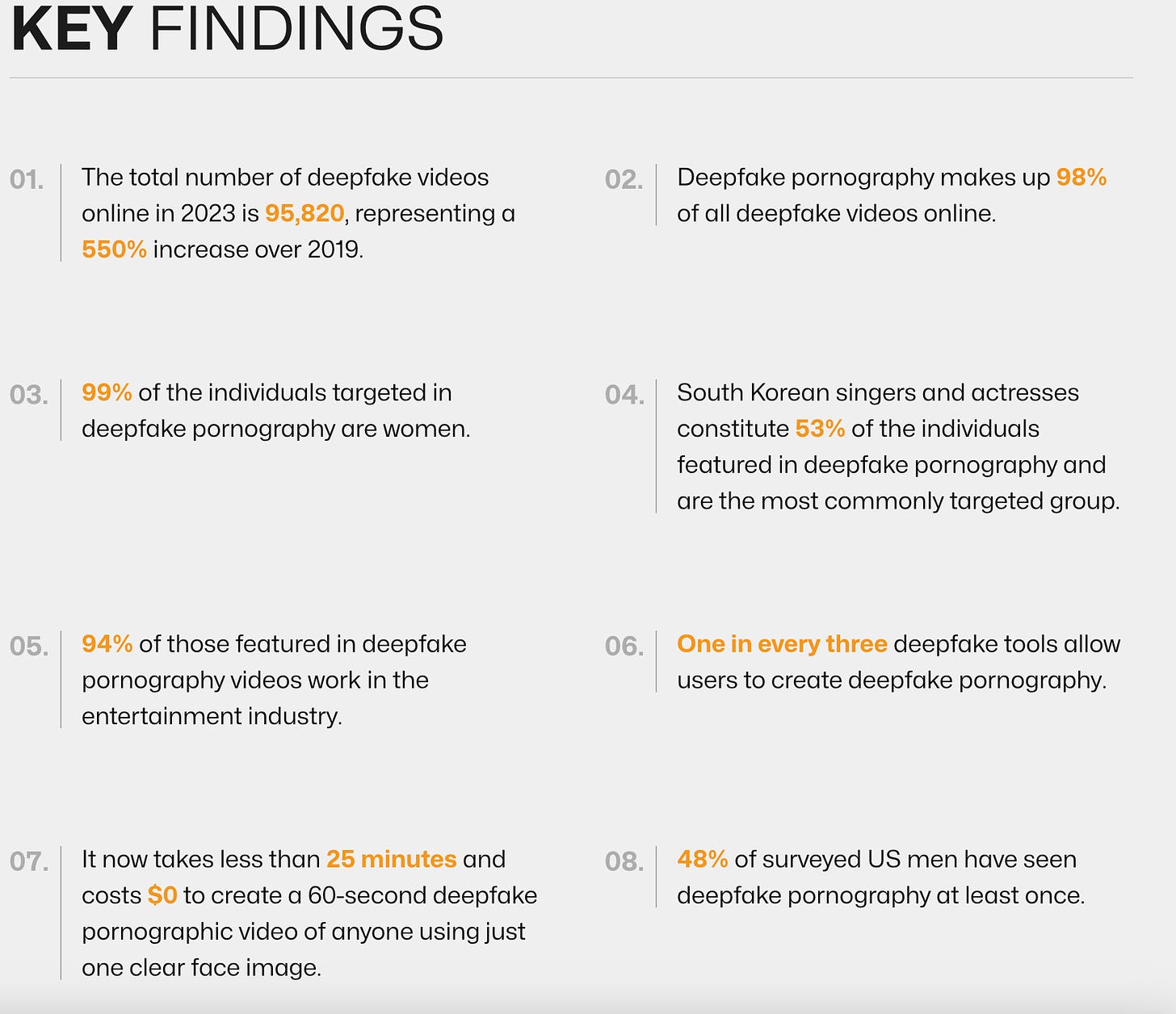

Why you should care: Nonconsensual deepfake pornography is a huge problem. 98% of deepfake videos are pornographic, and 99% of those depict women, who are often targeted for harassment and humiliation. If the Board rules that Meta has to take down nonconsensual explicit AI-generated images, these cases could be a big step towards combatting the problem, especially since Meta’s existing “manipulated media policy” and rule on “derogatory sexualized photoshop or drawings” aren’t doing enough. And, while a sample size of two is hard to extrapolate from, there are hints of international disparities in enforcement, which is a problem in content moderation more broadly.

China bans more messaging apps

Nutshell: Apple was made to remove WhatsApp, Signal, Telegram, and Threads from the Chinese App Store over “national security concerns.”

More: WhatsApp was already banned in China, but it could still be downloaded from the App Store and accessed with a VPN; now new downloads are impossible. Apple emphasized that it has to follow local laws, but the convenient timing of this enforcement (typical of Chinese tech regulation) suggests it may be a response to the TikTok divestment bill that just passed the US House of Representatives.

Why you should care: These app bans won’t impact you if you aren’t in China, but they’re another concerning crackdown on freedom of expression. They’re also an escalation of what may turn into a tit-for-tat tech battle between the US and China, though what the next targets would be (Temu? Shein? Device sales?) isn’t clear.

Extra Reckoning

Today, we have a guest author for the Extra Reckoning! Devin Almonor is a Machine Learning Engineer and the writer at the A.I. Ethicist, where his motto is that “the soul of artificial intelligence is its responsible and ethical implementation.” I’m super excited to have him writing about the nuts and bolts of how Meta’s algorithms decide on what content to show you in your Instagram/Facebook/Threads feeds.

When you open a social media app, the content there isn’t randomly plucked out of a bag, but algorithmically picked for you. Researchers at Meta have studied machine learning algorithms to enhance users’ engagement with the platform through content curation. Content providers use real-time bidding (RTB) to place content in users’ feeds based on their interests; typically, the content provider who bids the highest (either with money, in the case of ads, or an engagement metric, in the case of content) wins a slot in users’ feeds. There are two approaches to bidding: the hand-tuned approach and the Bidding And Ranking Together (BART) approach. The hand-tuned approach takes the expected Click-Through Rate (eCTR) (e.g., the likelihood of a user joining a Facebook group) and the expected Post-Click Conversion Rate (eCVR) (e.g., the likelihood of the user engaging with that Facebook group) and multiplies them by a probability function that is hand-tuned to reach the highest user engagement metric. The BART approach uses advanced machine learning models to optimize the bid price and rank of items in the recommendation system.
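To make the hand-tuned approach concrete, here is a minimal sketch of how such a score might be computed and used to rank candidates. It is an illustration under stated assumptions, not Meta's implementation: the names `Candidate`, `hand_tuned_bid`, and `rank_candidates` are hypothetical, the numbers are made up, and a single per-item `weight` stands in for the hand-tuned function, whose real form isn't described here.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A piece of content competing for a feed slot (hypothetical structure)."""
    name: str
    ectr: float  # expected click-through rate, between 0 and 1
    ecvr: float  # expected post-click conversion rate, between 0 and 1


def hand_tuned_bid(candidate: Candidate, weight: float) -> float:
    """Score a candidate as eCTR * eCVR * weight.

    `weight` is an assumption: it stands in for the hand-tuned function,
    which a person would adjust by trial and error to lift engagement.
    """
    return candidate.ectr * candidate.ecvr * weight


def rank_candidates(candidates, weights, default_weight=1.0):
    """Return (name, bid) pairs sorted from highest bid to lowest."""
    scored = [
        (c.name, hand_tuned_bid(c, weights.get(c.name, default_weight)))
        for c in candidates
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Made-up numbers purely for illustration.
    candidates = [
        Candidate("group_a", ectr=0.10, ecvr=0.50),
        Candidate("group_b", ectr=0.20, ecvr=0.20),
        Candidate("group_c", ectr=0.05, ecvr=0.40),
    ]
    # Someone decided by hand that group_b deserves a boost.
    weights = {"group_b": 1.5}
    for name, bid in rank_candidates(candidates, weights):
        print(f"{name}: {bid:.3f}")
```

In this toy example, the boost lifts `group_b` above `group_a` even though its unweighted score is lower. That manual adjustment is the part BART aims to learn automatically.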

Here’s an analogy: the hand-tuned approach is like stocking your bookstore by tracking which books actually sell (eCTR) and which books are actually read after they’re bought (eCVR). Once you have this information, you would still need to work out for yourself which genres, authors, and times of year drive sales of the best-selling books. BART automates the process; it learns all the complex relationships between customers, books, genres, authors, and times and returns the best strategy to market your books. Using BART, the Meta research showed that Facebook Home Feed engagement increased by 14.7%, Friend Request acceptances increased by 7.0%, and the number of active users increased by 1 million. Meta researchers have concluded that this BART approach creates a better, more comprehensive user experience.

Meta’s research focuses on optimizing bid and ranking systems, which it also uses in ad targeting. It has recently made efforts to shift towards more ethical and inclusive ad targeting by eliminating certain targeting filters. Prior to this shift, there were concerns about the possible misuse of targeted ads; advertisers could have discriminatorily targeted users based on race, disability, or even income. The BART approach has permitted Meta’s ad service to expand to more audiences, and so Meta has provided best practices for ad use. It appears that Meta is trying to become more responsible in its role in influencing public opinion. Efforts to create a more ethical, responsible ads system are a step in the right direction, especially since Meta has been under fire, in part for disbanding its Responsible AI Team. We are in the world’s biggest election year ever, and in the USA, disinformation, including in ads, is a major concern. Meta has a responsibility to keep inappropriate and manipulative ads from resurfacing, both to protect honest elections and to promote civility. We’ll have to be vigilant as we see whether Meta will back its statements with actions.

I Reckon…

that if you’re curious about tech philosophy, the LuFlotBot is a great resource.

Thumbnail image generated by DALL-E 3 via ChatGPT with the prompt “Make me a hazy abstract Impressionist picture representing the concept of being a hostage.”