Welcome back to the Ethical Reckoner, and happy July! In this Weekly Reckoning, we cover the Surgeon General’s call for a social media warning label (admittedly old news but important), and then a grab bag of new tech: generative AI (in the form of a lawsuit from the recording industry), lab-grown meat (in Singapore), and crypto (in the form of a hamster-based app sweeping Iran). Then, I investigate what’s going on with Meta’s AI labeling and why photographers are complaining that it’s labeling their hand-developed photos as AI-generated.

This edition of the WR is brought to you by… pride (it’s still June as I write this)

The Reckonnaisance

Surgeon General calls for social media warning label

Nutshell: Dr. Vivek Murthy wrote an op-ed arguing that social media should have a warning label just like cigarettes.

More: Murthy calls on Congress to authorize the warning label (though what exactly is the point of being the Surgeon General if you can’t do this on your own?) and to pass additional legislation to require social media companies to share data on health effects. He also calls on schools to go phone-free (which California governor Gavin Newsom is working on), parents to keep their kids off social media until after middle school, and teens to support each other. How the label would be “applied” and what counts as social media is unclear.

Why you should care: Ok, this happened last week, but after discussing children’s online safety in ER 22, I couldn’t not mention it. This whole-of-society rallying cry is a direct effect of the recent debate over kids and social media. But the evidence all around is shaky, and this proposal is unsurprisingly dividing people as well. The urge everyone has is to do something, but the question should be whether this is the most effective way to help. When we put warning labels on cigarettes saying they cause cancer, there was robust scientific evidence to support it. That’s not the case here, and Hard Fork raised the concern that a warning label might lead parents of kids who could benefit from social media to keep them off it. Regardless, it’s a good way to continue the discussion, and hopefully to rally some support around experiments with phone-free schools and other initiatives that might generate some of the evidence we need.[1]

Singapore on the cutting edge of lab-grown meat

Nutshell: Despite funding and regulatory issues elsewhere, Singapore is investing heavily and approving cultured meat products rapidly.

More: Singapore is trying to produce more of its food domestically, including alternative proteins like cultivated meat (growing animal proteins like beef and chicken in a lab from cell samples). The price of cultivated meat is dropping and may reach parity with conventional meat by 2030, but investment in the US and EU is flagging, and two US states and Italy have banned it. The Singaporean government is investing a ton into the technology, though, and several other countries in Asia and the Middle East are starting to put more resources into it as well.

Why you should care: Here are some facts about meat:

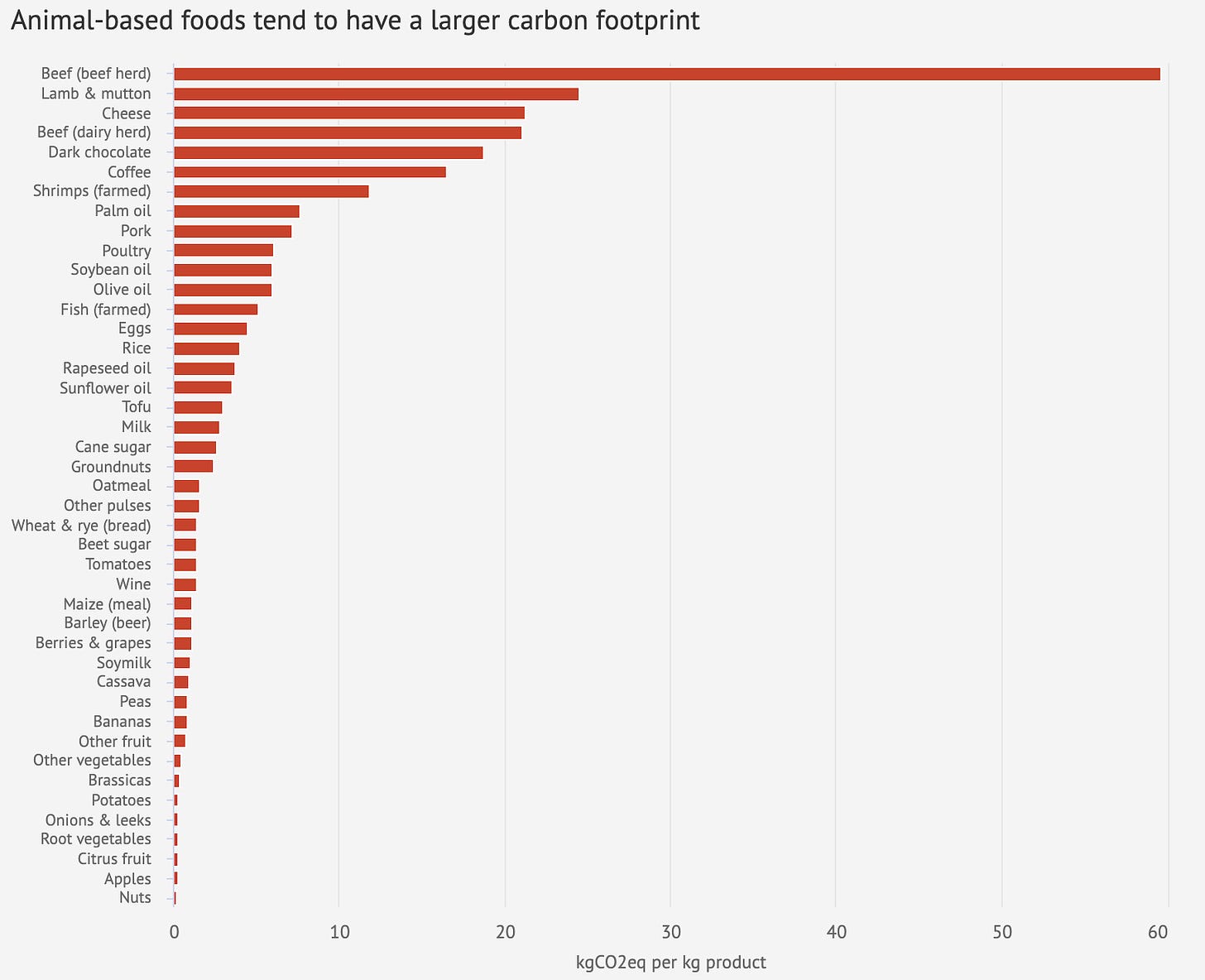

Livestock are responsible for 14.5% of global greenhouse gas emissions.

92 billion animals are killed for food each year (including 200 million chickens a day).

But people like eating meat, and so finding alternative ways to fulfill that craving is going to be important in how we address climate change, since switching to a plant-based diet can reduce emissions by almost half and food land usage by 3/4. Cultured meat could be one way to do this, but Silicon Valley has turned away from it in recent years (probably towards AI), and well-funded lobbyists are waging “label wars” to try and make it so plant-based alternatives can’t be called “milk” or “burgers.” Amidst these frustrations, it’s heartening to know that some areas are pursuing it more actively, and hopefully these innovations will trickle back to the US (where we eat three times the global average of meat).

The recording industry is coming for generative AI

Nutshell: The Recording Industry Association of America is suing music-generating AI companies, alleging copyright infringement.

More: The lawsuit alleges that the tools have “indiscriminately pillaged more or less the entire history of recorded music” to train their models, which then end up reproducing existing songs almost exactly. The companies admit that they scraped all the music they used without making deals with record labels, claiming “fair use,” but fair use doesn’t apply when you’re basically reproducing content wholesale. And the examples are persuasive—think a slightly distorted, but fully recognizable, Dancing Queen; you can hear some in Hard Fork here. A great quote from the RIAA CEO in that podcast:

“They took chickens, made chicken salad, and then said they didn’t have to pay for the chickens.”

Why you should care: This is interesting because it’s almost the same argument that authors and news publishers are making in their lawsuits against text-generating AI—that tools like ChatGPT can spit out copyrighted and paywalled articles almost verbatim—but seems like a more persuasive example because, while the text generators can claim they only grabbed publicly available snippets, it’s very obvious that the companies took entire copies of songs. But this could then set a precedent for the text-generating AI companies, too—TechCrunch calls it “the bloodbath AI needs.”

Crypto game craze spreads to Iran

Nutshell: Amidst rapid inflation, Iranians are turning to sketchy apps that hint at getting cryptocurrency for playing.

More: The Hamster Kombat “game” (I use that term generously because the only game mechanic is tapping) gives users in-game coins and sort of looks like an app that handed out free crypto, so millions of people are signing up in the hope that it will pay off. Hamster Kombat hit 200 million users in less than three months and claims its YouTube channel was the first to hit 10 million subscribers in under a week.

Why you should care: Something similar happened in the Philippines with the game Axie Infinity and other crypto earning games, which created an exploitative, Ponzi-like structure that eventually came crashing down. When economic conditions get desperate, people are more inclined to turn to what seem like magical technical solutions, which leaves them vulnerable to exploitation and scams. But in this case, there’s not even a likely payoff: the only ones who seem to be making money are the app developers.

Extra Reckoning

Something funny is going on with Meta’s AI detection, and photographers are mad. As a reminder, Meta announced in February that they were going to label AI-generated content from partner companies’ tools based on metadata packaged with the image. What it sounded like from the announcement was that other companies, like OpenAI and Microsoft, would embed “invisible markers” into images generated by tools like DALL-E (what I use to make this newsletter’s thumbnails). Meta would then scan for them when photos are uploaded and, if they found them, apply a “Made with AI” label. They explicitly noted that they are “working hard to develop classifiers” to automatically detect AI-generated content without those invisible indicators. The whole post only discusses “AI-generated” and “content created using AI,” so people were left with the impression that Meta would be labeling pictures generated wholesale by AI. Which is good!

But that’s not what’s happening. Photographers are complaining that when they upload their non-AI-generated photos to Instagram, it labels them as “Made with AI.” This includes my cousin, Max Tardio, a talented photographer (not just family bias speaking). He shoots on film and develops his photos in a darkroom, then uploads them to Instagram, where they’ve been getting labeled as “Made with AI.”[2]

Max’s process is literally the antithesis of AI generation. There’s no prompt; there’s just his camera. No algorithms, just darkroom chemicals. And he’s not alone. Lots of photographers are complaining that their pictures are getting the same flag, including former White House photographer Pete Souza. One possible culprit is that apps like Lightroom and Photoshop are now using AI for even very simple editing tools, which gets put in the metadata and then flagged as AI-generated. I’m not an expert on how photo-editing algorithms have changed over time, but it seems that simple edits that photographers were doing before AI shouldn’t get a photo labeled as “Made with AI,” which implies that something was wholesale created by AI. Since before generative AI, I’ve been in favor of labeling when photos are significantly edited, especially in advertisements. But undoing red-eye or removing a speck from an image isn’t creating something wholesale or making a model look impossibly thin, and applying a Made with AI label that is explicitly targeted at AI-generated images is unfair and offensive to the very human photographers.

It seems like the label is somewhat dependent on which tool you use—for example, in Photoshop, Spot Healing and Content-Aware Fill don’t trigger the AI label, while using Generative Fill does, even if used for spot removal. This seems to be what befell Max, who loads his scans into Photoshop and removes the occasional speck of dust (because again: hand-developed!). But photographers aren’t informed of which tools will trigger the label, so it’s a massive guessing game.

It seems like we need to have a broader conversation about what AI manipulation is and what kind of manipulation we care about. If a single Photoshop spot removal counts as “AI generation,” then why don’t they label smartphone pictures that people have edited the same way? Or, to be honest, smartphone pictures in general? Because when you take a picture, the image you end up with isn’t just based on the optics. Phones apply loads of AI-based processing. You can see this when you use Portrait Mode: AI algorithms are what detect the edges and decide where to blur. This was dramatically illustrated when YouTuber Marques Brownlee used a Samsung phone to take an astoundingly crisp picture of the moon, but that wasn’t what the camera saw. It’s what the algorithm knows the moon looks like and thinks you want the moon to look like, and so it’s what the camera app makes the moon look like. But no one is calling for smartphone pictures to be labeled as “Made with AI.”

Meta acknowledges that their AI labels are still a work in progress, and hopefully they’ll continue refining and improving their detection measures. But we need more than just better technical measures—we need to rethink what it means to use AI to manipulate an image and how we want to disclose it. These are my proposed standards:

A photo generated wholesale by AI should be labeled as “Made with AI.”

A photo where AI has created something new (including background fill after deleting an object) should be labeled as “Altered with AI.”

If there are AI-based editing tools that we decide merit disclosure, they can be labeled as “Edited with AI.”

The last two may be difficult to distinguish between, but photographers should be informed in their photo editing software which tools will trigger which labels, because it may come down to the tools rather than the actual use. Lastly, social media and editing software companies should be in conversation with photographers over this (Meta says they are aware of feedback and are exploring changes). Because, as my cousin put it, sometimes, “it’s literally a piece of paper.”
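For the technically inclined, the three proposed labels above can be sketched as a simple decision rule. This is only an illustration of my proposal, not how Meta or any editing tool actually works: the `AIUse` categories, the `label_for` function, and the `disclose_edits` switch are all names I made up for this example.

```python
from enum import Enum
from typing import Iterable, Optional


class AIUse(Enum):
    """Hypothetical categories of AI involvement in a photo."""
    NONE = "none"            # e.g. a film scan with no AI-based edits
    EDITED = "edited"        # AI-based editing tool that creates nothing new
    ALTERED = "altered"      # AI created something new (e.g. background fill)
    GENERATED = "generated"  # image generated wholesale by AI


# Strongest category first, so the most significant use of AI decides the label.
LABELS_BY_PRIORITY = [
    (AIUse.GENERATED, "Made with AI"),
    (AIUse.ALTERED, "Altered with AI"),
    (AIUse.EDITED, "Edited with AI"),
]


def label_for(uses: Iterable[AIUse], disclose_edits: bool = True) -> Optional[str]:
    """Return the proposed label for a photo, or None if no label applies.

    `disclose_edits` models the open question of whether AI-based
    editing tools merit disclosure at all (an assumption of this sketch).
    """
    present = set(uses)
    for use, label in LABELS_BY_PRIORITY:
        if use in present:
            if use is AIUse.EDITED and not disclose_edits:
                return None
            return label
    return None
```

Under this rule, a hand-developed photo with no AI-based edits gets no label at all, and a photo that was both edited and altered with AI gets only the stronger “Altered with AI” label.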

Just like this newsletter (thumbnail excepting), this was not Made with AI.

Update, 1/7/24 14:42 Eastern: Meta announced hours after this issue went out that they are updating Made With AI labels to “AI info” to provide more context for viewers. We still need more transparency for artists, but this is a start.

I Reckon…

that when an AI tool accused of plagiarism plagiarizes an article explaining its plagiarism, we’ve reached the seventh circle of irony.

[1] In the meantime, the EU is going after tech companies head-on with charges against Apple and Microsoft under its new Digital Markets Act, which aren’t directly related to child protection but show the way the winds are blowing there.

[2] Amidst the other flaws we’ll discuss, the labels are only visible on mobile.

Thumbnail generated by DALL-E 3 via ChatGPT with the prompt “Please generate an abstract brushy painting representing the concept of darkroom development.”