Welcome back to the Ethical Reckoner. In this Weekly Reckoning, we cover how the US Supreme Court threw social media companies a (necessary) lifeline, how protestors in Kenya are using ChatGPT to spread information, OpenAI and Microsoft’s different approaches in China, and Apple pulling VPNs from Russia. Then, I narrate a brief history of AI.

This edition of the WR is brought to you by… the first watermelon of the season

The Reckonnaisance

US Supreme Court saves social media content moderation… for now

Nutshell: The Supreme Court sent cases about Texas and Florida laws restricting how social media companies can moderate content back to lower courts for review.

More: The opinions didn’t formally rule on the merits of the laws, but ordered the lower courts to re-assess the laws and their First Amendment implications. However, the court seemed skeptical of the laws, especially the Texas one, which could be foreshadowing if the cases return to the Supreme Court. In the meantime, the laws are on pause.

Why you should care: Content moderation is what keeps social media from being a cesspool, because unfortunately, when left to our own devices, we aren’t exactly paragons of online virtue (see: 4chan). It’s especially important for keeping marginalized groups safe online, but these laws put platforms’ ability to do that in jeopardy in the name of “free speech.”

OpenAI leaves China while Microsoft doubles down

Nutshell: OpenAI is cutting off access to its API[1] in China, contrasting with Microsoft’s Bing, which works in China with comprehensive censorship.

More: This is perhaps an unfair comparison, because they are separate companies. But Microsoft has a massive investment in OpenAI, so this contrast in China strategies is really interesting. OpenAI is cutting off all of its services in China, but Microsoft keeps Bing available there because it says that pulling out would cut off an “important avenue of communication and expression.” But a comparison of search engine censorship practices finds that Bing censors more aggressively than even the Chinese search engines, which raises the question of how much of an avenue for expression it really is.

Why you should care: There’s been a lot of drama around OpenAI recently, and this could be a potential source of further drama if these two very intertwined companies are taking completely different approaches to China. It’s also further splintering the generative AI ecosystem—Chinese LLMs are overtaking US ones in some key metrics, and keeping ChatGPT out of China is likely to just amplify the competitive dynamic.

Protestors in Kenya used generative AI to aid finance bill protests

Nutshell: Protestors used generative AI to spread information about proposed tax increases, eventually getting them scuttled.

More: Several GPTs (basically specialized versions of ChatGPT) did the rounds in Kenya. One let you ask about politician corruption cases, while another explained the controversial bill. Both GPTs have more than 10,000 conversations and show how Kenya’s younger generations are using the Internet in really innovative ways for protest—they also used TikTok and X livestreams, crowdfunding platforms, and walkie-talkie apps. In the end, the government withdrew the bill.

Why you should care: It’s fascinating to hear about how a new generation is using emerging tech for protests, and in this case, it was successful. However, I do wonder how accurate these GPTs are: I asked one a few things about taxes on eggs and it gave me a few different answers about what the tax is, but I couldn’t find the bill text to verify. Still, in the whole “what is generative AI good for” debate, this is one front that should be explored.

Apple pulls 25 VPN apps from Russian app store

Nutshell: After a request from Russian authorities, Apple removed 25 VPN apps from the App Store.

More: This is part of a Russian crackdown on VPNs amidst their wartime censorship campaign. VPNs make it seem like you’re browsing from somewhere else, so they’re crucial tools to access information when a state is censoring the Internet.

Why you should care: This probably doesn’t affect you (although if you’re a Russia-based reader, I take that back). But it’s part of a trend towards authoritarian governments making the Internet less free, and that’s always worth paying attention to.

Extra Reckoning

AI has obviously been making a lot of headlines recently, but it has a 70-year history that gets lost in a lot of discussions, especially when generative AI (a relatively recent development) gets equated to the field as a whole. It can be a lot to try and wrap your head around—even for me as a computer scientist—and so when my grandma asked if I knew of any good short histories of AI, I promised I’d write one. Here, I’ll present a brief (and certainly biased) history of AI, divided into “summers” and “winters”.

The First Summer (1950s-1970s)

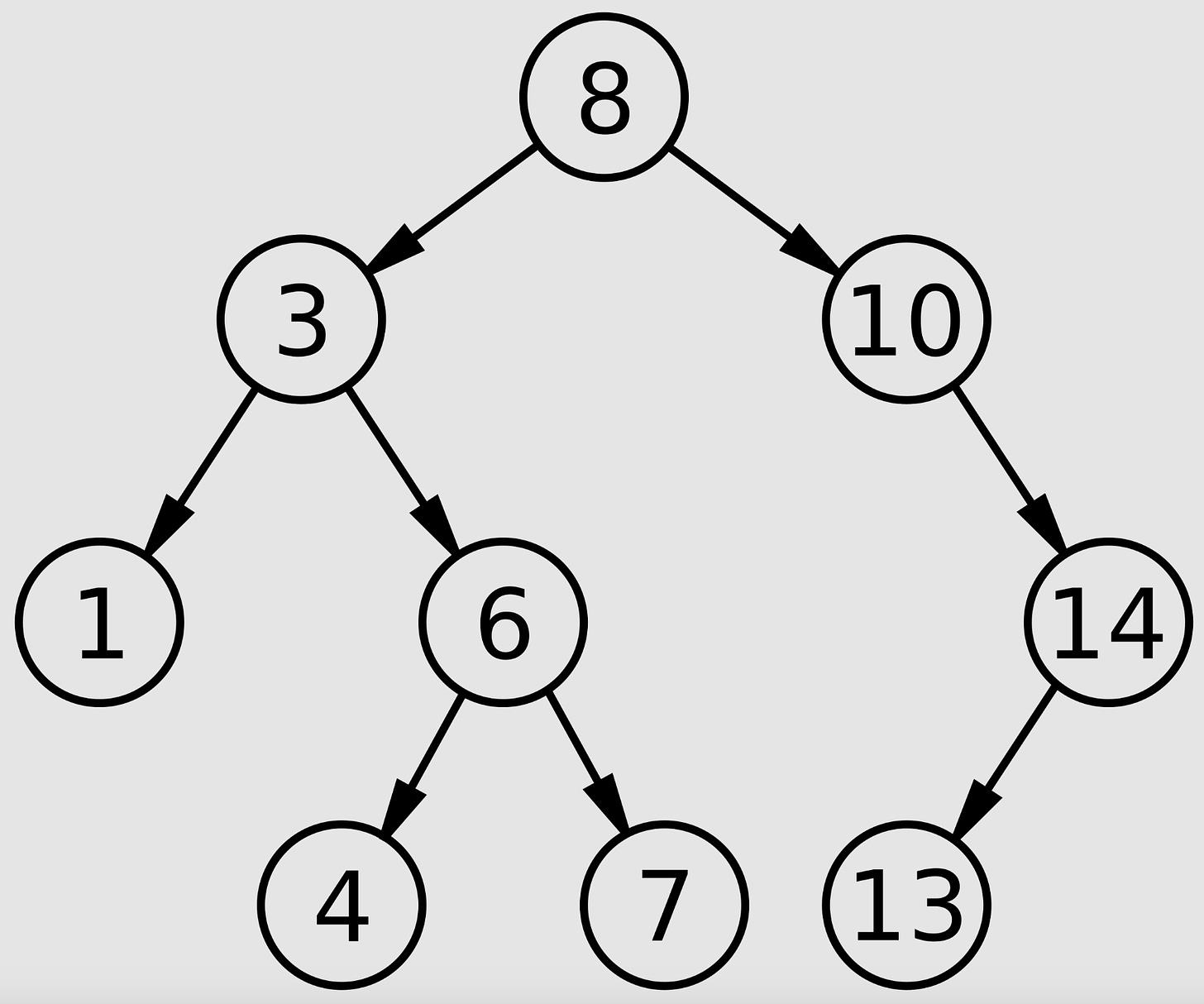

We’re skipping the prehistory of AI, which could really go back to myths thousands of years old about intelligent automata. More relevant would be Alan Turing’s musings about thinking machines (and the now-famous Turing Test) in the 1940s. But if you had to put a start date on AI as an academic discipline, it would be in 1956, when the Dartmouth Summer Research Project on Artificial Intelligence launched the field. AI in subsequent years primarily used search-based algorithms: essentially modeling a problem (like a checkers game) as a tree of possibilities and then algorithmically walking through it to find a solution (like the best next move), backtracking when necessary. These early approaches are called “symbolic AI” because they’re based on human-understandable representations of problems.

This approach proved excellent for relatively bounded problems like checkers, but computational limitations meant it couldn’t scale well. Eventually, funding dried up, and AI entered its first “winter.”
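For the curious, here is a minimal sketch of that tree-search idea. Checkers is too big for a newsletter, so I'm assuming a toy stand-in game (my choice, not anything from the early AI literature): players take turns removing 1 to 3 stones from a pile, and whoever takes the last stone wins. The function names `can_win` and `best_move` are made up for illustration.

```python
def can_win(stones: int) -> bool:
    """Return True if the player about to move can force a win."""
    # Each legal move is one branch of the tree of possibilities.
    for take in (1, 2, 3):
        if take > stones:
            break
        # If this move leaves the opponent in a losing position, we win.
        if not can_win(stones - take):
            return True
    # Every branch failed (or there were no moves): back up and report a loss.
    return False


def best_move(stones: int):
    """Return a winning number of stones to take, or None if there is none."""
    for take in (1, 2, 3):
        if take <= stones and not can_win(stones - take):
            return take
    return None


print(best_move(10))  # 2: leaves 8 stones, a losing pile for the opponent
print(best_move(4))   # None: every move hands the opponent a win
```

Every step here is human-readable, which is the "symbolic" part. The catch is that the tree grows explosively as the game gets bigger, which is roughly the scaling problem described above.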

Prediction: “machines will be capable, within twenty years, of doing any work a man can do” (H.A. Simon, 1965) ❌

The Second Summer (1980s)

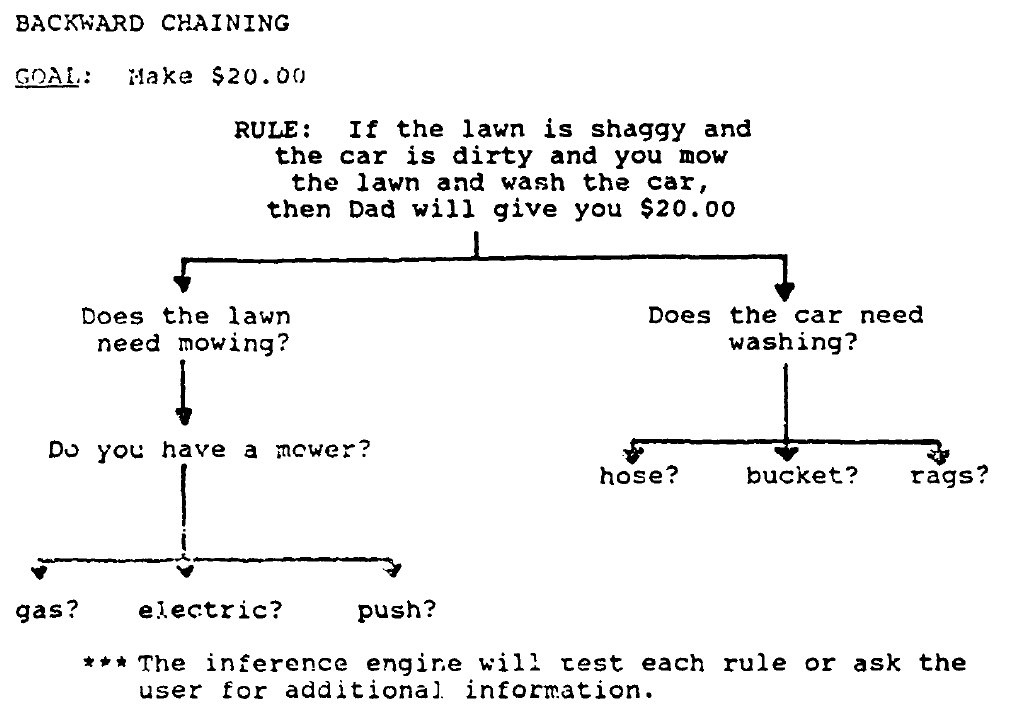

In the 1980s, AI found a new, useful application: expert systems. These answered questions about a specific, bounded domain using logic-based approaches.
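To give a flavor of what "logic-based" means here, below is a tiny sketch of one common technique, forward chaining over if-then rules. The car-trouble rules and the names `RULES` and `infer` are invented for illustration; real expert systems held hundreds or thousands of rules written with domain experts.

```python
# Each rule: if all the conditions are known facts, conclude something new.
RULES = [
    ({"engine_wont_start", "lights_dim"}, "battery_flat"),
    ({"battery_flat"}, "recommend_jump_start"),
]


def infer(facts):
    """Apply the rules repeatedly until no new conclusions appear."""
    known = set(facts)
    trace = []  # records which rule fired, so the answer can be explained
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in RULES:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                trace.append((sorted(conditions), conclusion))
                changed = True
    return known, trace


known, trace = infer({"engine_wont_start", "lights_dim"})
for conditions, conclusion in trace:
    print(conditions, "->", conclusion)
```

The `trace` list lets the system say exactly why it reached a conclusion, but it can only ever know what someone wrote into its rules.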

Japan kicked off a new funding boom, and development ran hot and fast for a few years, but like any bubble, it eventually popped. AI companies went bust, and fewer and fewer companies found expert systems useful. AI entered its second winter.

Prediction: AI will be able to carry on a casual conversation by 1992 (Japan 5th Generation Project) ❌

The Invisible Spring (1990s-2000s)

By the 1990s, AI was a bit of a dirty word in business, but research continued, and the field made slow but steady progress by incorporating more complex probabilistic reasoning. AI became embedded into everyday products, like the Google Search algorithm and bank fraud detection, and the definition of AI became blurry. Deep Blue beat Garry Kasparov in 1997, showing that hardware and algorithmic advances were serving their purpose. This laid the groundwork for what was to come: the era of Big Data.

The Third Summer (2010s-today)

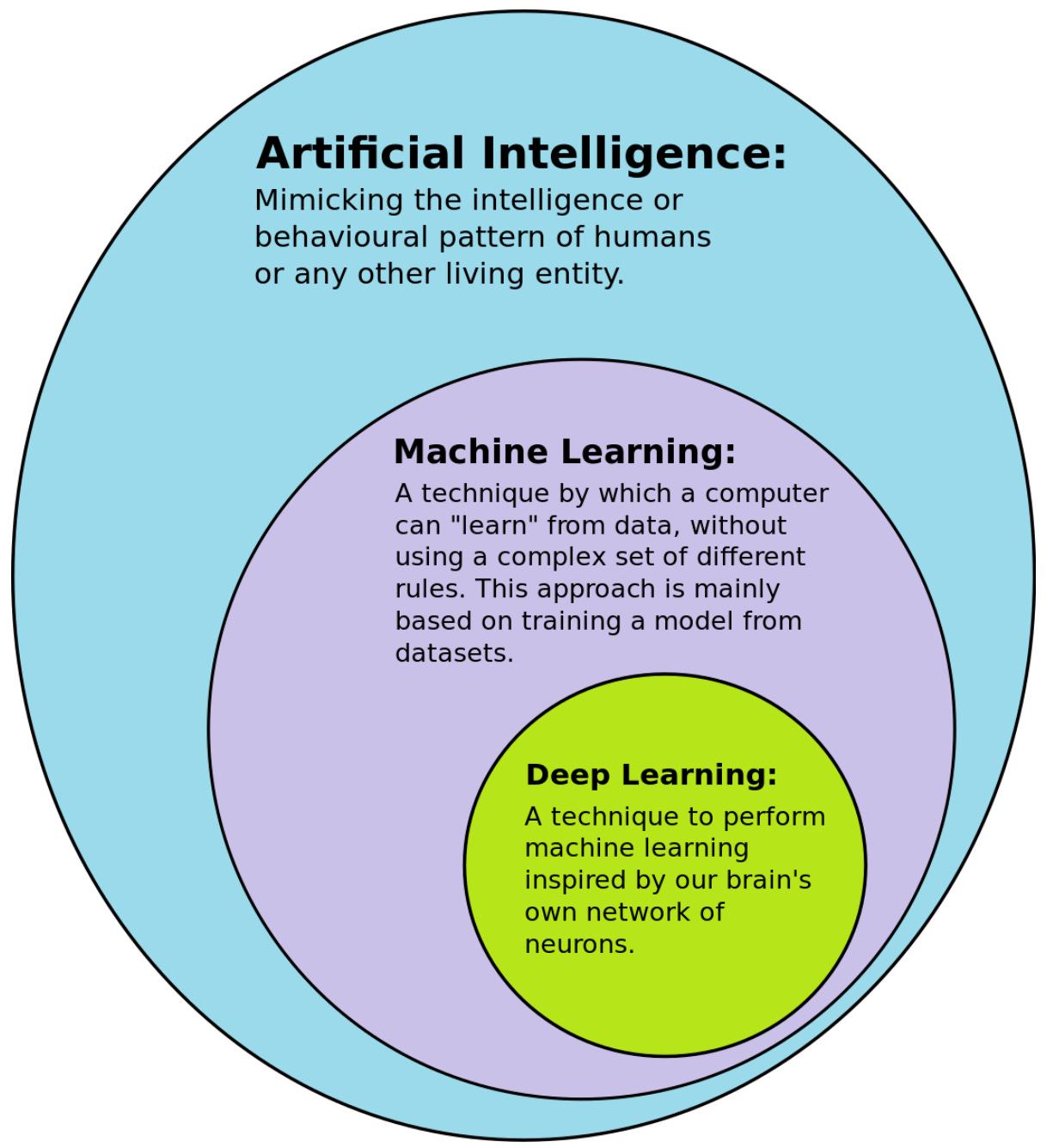

In the new millennium, the increased ubiquity of computers and the Internet meant that there was a whole lot of data floating around, and machine learning’s time had come. Instead of logic-based rules, machine learning (ML) uses data to teach a model something: how to recognize a cat, how to transcribe handwriting, etc.

This can do things that traditional logic-based approaches can’t. The difference between the two is like the difference between cooking by exactly following recipes versus through trial and error. If you follow detailed recipes step-by-step, you’ll get an end result, but you may not learn how to cook, and the next time you cook, you’ll follow another recipe exactly.[2] If you start learning to cook through trial and error, it’ll take a while to end up with the dish you want, but you’ll have learned patterns and developed an intuition for what goes together through experience. Logic-based systems are like following a recipe: they go step-by-step according to the rules and don’t really learn anything from the experience. ML algorithms do learn from the data you feed them.

But this also illustrates another crucial difference between these types of systems: explainability. If you ask the recipe-following cook why they turned the heat up on a pan, they’d be able to point to the step in the recipe where it says “turn heat up to high.” With a logic-based system, you can always trace the decision through the logical steps. But if you ask the intuitive cook why they did that, they may not be able to explain it: it just felt like the right thing to do. If pressed, they may be able to provide some general answers (it wasn’t browning fast enough, I wanted it crispier, the pan was heavier than expected) but you can’t trace the whole process that led to the decision. So it is with ML: the process is so complex that it’s difficult, if not impossible, to provide an explanation. Hence, these systems are often called “black boxes.”

During this era, you also have the birth of AI ethics, which is… one of the major reasons why we’re here on this Substack. It explores the ethical implications of AI and its possible impacts, positive and negative, on our society. You also see an intensification of AI safety concerns. For an explanation of the faction wars between them, check out ER 19.

The Generative AI Heat Wave (2020-today)

Generative AI is a subset of ML. Instead of classifying existing content, it generates new content. In the case of text, it’s trained on reams and reams of existing text and learns to predict what’s likely to come next. So when you ask ChatGPT a question, the large language model (LLM) that powers it is generating its response based on what the most likely next bit of text is. Just like in the earlier summers, AI is the buzziest of buzzwords, companies are leaping to boast about their AI integrations, and start-ups and predictions are springing up left and right. But there are also doubts: how useful is generative AI exactly? We’re already seeing signs that the bubble may be reaching its zenith: the lack of profitable business models, slow paid adoption, increasing industry turmoil, etc.
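If "predict what's likely to come next" sounds abstract, here is a deliberately tiny sketch of the idea. It just counts which word follows which in a one-sentence corpus I made up. Real LLMs use large neural networks trained on vastly more text, so treat this as an illustration of the objective, not of how ChatGPT is built. The names `train` and `predict_next` are hypothetical.

```python
from collections import Counter, defaultdict


def train(text):
    """Count, for every word, which words follow it and how often."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def predict_next(follows, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    options = follows.get(word.lower())
    if not options:
        return None
    return options.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat slept"
model = train(corpus)
print(predict_next(model, "the"))  # cat ("cat" follows "the" twice, "mat" once)
print(predict_next(model, "dog"))  # None (never seen in the corpus)
```

Generating text is then just repeating this step: predict a word, append it, and predict again from the new ending.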

Even though AI is in a very hot summer, we’ve seen this film before, and before we get too caught up in the hype, it’s important to keep in mind that the history of AI has been very much cyclical. Sure, it’s been on a trajectory of increasing uptake and usefulness, but it hasn’t been a steady climb. What goes up must come down: we just throw the ball a little higher each time.

The Next Winter: ???-???

I Reckon…

that it’s astounding that the country that can convert a train line to a subway in four hours has only just eradicated the floppy disk.

[1] An API (application programming interface) is basically a way for two pieces of software to talk to each other. It’s the computer version of a GUI (graphical user interface, think the visual way you interact with any program). If you’re writing an app that uses ChatGPT, you’d use the API. If you’re using it directly, you’d use the website GUI.

[2] One hopes you’d pick up a few things that you can extrapolate to cooking as a whole, but logic-based systems don’t.

Thumbnail generated by DALL-E 3 via ChatGPT with variations on the prompt “Make me an abstract Impressionist, brushy oil painting representing the concept of AI history”.

Thanks for the newsletter.

Though I tend to support regulation of social media, such as the European model, I don’t think the Texas and Florida laws are good laws. It seems that the Supreme Court will not rule in favor of regulating content moderation, but it might support some regulation for transparency.