Welcome back to the Ethical Reckoner. This Weekly Reckoning is all about systems: algorithmic, human-in-the-loop, human-out-of-the-loop, centralized, and decentralized, in contexts from grocery shopping to Wikipedia to war. We'll look at stories including the automated targeting system being used in Gaza and Amazon's "Just Walk Out" system that relied on human labor in India, and then muse about how Reddit and Wikipedia work and how their structures could (again) help fix peer review.

This edition of the WR is brought to you by… spring (finally) springing in Belgium

The Reckonnaisance

Israel’s army using AI to identify targets in Gaza

Nutshell: The Israeli army is relying on an AI system, “Lavender,” to identify targets for bombing, raising concerns about human oversight and proportionality.

More: Officers operating the system reported taking just 20 seconds to confirm each of Lavender's decisions, calling themselves a "rubber stamp" for the machine. The system is known to have a 10% error rate, meaning that 10% of the targets it identified weren't actually Hamas operatives.[1] Some of this error may stem from the fact that the training dataset included civil defense workers, so the model would learn to flag noncombatants as targets, too.

Why you should care: Ethicists and humanitarian organizations, including the UN, are united in arguing that humans must retain moral control over life-and-death decisions, and the UN is pushing to outlaw lethal autonomous weapons (LAWs). Twenty seconds spent checking only whether the target was male is not meaningful human oversight. Combined with a military structure that is permissive of civilian casualties, this system is contributing to the enormous loss of life in Gaza. Whatever your views on the war, there is broad agreement that LAWs are immoral, and this system is edging, toe by toe, over a very dangerous threshold.

X’s Grok AI pushing fake news

Nutshell: After a fake news headline about Iran bombing Tel Aviv started to spread on X (née Twitter), X's AI, Grok, amplified it further in the platform's Explore tab.

More: X is trying to roll out Grok AI more broadly, including by delegating news curation in the new Explore tab to it. As a result, users saw an AI-generated headline and summary of the fake story displayed prominently above the Tweets that had started spreading the misinformation.

Why you should care: We know that algorithms can amplify misinformation, but this goes further: Grok used the platform's own infrastructure to give a false story top billing. Human users seem to have created the original misinformation, a reminder that we don't need AI and large language models (LLMs) to generate harmful falsehoods. Still, it raises the possibility of an automated content loop in which LLMs generate misinformation that gets engagement and is then amplified even further. X, which has no trust and safety team to speak of, could be especially vulnerable to this. Either way, putting an algorithm in charge of editorial and curatorial decisions with no human oversight is clearly risky. (I've been skeptical of X's algorithmic decisions ever since it pushed me a Tweet claiming that Elon Musk had died.)

Amazon “Just Walk Out” revealed to be 1000 Indians in an AI trench coat

Nutshell: Amazon touted the supposedly AI-powered technology behind its checkout-free stores, but the system actually depended on a large team of human workers.

More: The Just Walk Out system was supposed to use sensors and cameras to detect what customers took off the shelves and automatically charge them when they left the store. In reality, the tech was so unreliable that, according to The Information, 70% of purchases required intervention from "Machine Learning data associates," a team of 1,000 people in India. Amazon said the associates were hired only to train the machine learning models behind the tech, but regardless, Amazon is now dropping Just Walk Out in its stores.

Why you should care: We're often told to "pay no attention to the man behind the curtain" when it comes to tech. Sometimes what's behind the curtain is questionable data collection practices or environmental harm. Other times it's literally a person, or many people, as with Cruise's "self-driving" cars, which required frequent remote intervention. Often these are hundreds or thousands of low-paid workers in developing countries, as when OpenAI used hundreds of low-paid workers in Kenya to label offensive content. It's a reminder to always look behind the curtain of tech hype, even though that isn't always easy.

Snapchat making design changes to decrease teen anxiety

Nutshell: Snapchat has turned off its “Solar System” feature by default after teens reported anxiety and splintered relationships.

More: The “Solar System” feature placed Snapchat+ users’ friends on a map of the solar system—being Mercury meant you were their closest Snap friend, Neptune… not so much. But this quantified ranking of Snapchat interactions was treated not as a somewhat arbitrary metric of how each person used the app, but as a measure of real-life relationships. Teens reported this “granular information about social standing” created drama and splintered relationships, and Snapchat responded by turning the feature off by default.

Why you should care: This is a small move on a small feature, but a constructive one. Small design changes can have big effects, and when teens say things like "a lot of kids my age have trouble differentiating best friends on Snapchat from actual best friends in real life," any design change that helps shift this dynamic is welcome. There's been a lot of debate over social media's role in teens' mental health, but we don't need to have answered every research question to agree that platform design changes that improve kids' well-being are a good thing. And while relying on platforms to make voluntary design changes isn't the best strategy, in the absence of concrete regulatory action it's heartening to see progress, however small. Whether platforms will take bigger measures that actually cut into their bottom line, though, remains to be seen.

Extra Reckoning

Last week, we talked about the thankless roles of peer review and open-source software contribution. This week, I want to explore two other groups of volunteers that keep the Internet afloat: Reddit moderators and Wikipedia editors.

Wikipedia is overseen by the Wikimedia Foundation but is self-governing and self-organizing. One of its five anchoring principles is "Wikipedia has no firm rules"; a linked policy page states, "If a rule prevents you from improving or maintaining Wikipedia, ignore it." Wikipedia editors have different privilege levels. Some are granted automatically based on how long an editor has had an account, how many edits they've made, and so on. More powerful privileges are granted by community consensus, by elected Stewards, or by the Arbitration Committee, a group of elected editors responsible for resolving community disputes. While there is some hierarchy to prevent anarchy, Wikipedia prides itself on being a largely flat, decentralized organization. It's had its share of controversies, including a no-confidence vote that led a board member to step down in 2016, but for the most part it has run fairly smoothly; even the no-confidence vote was an example of the governance structure (or lack thereof) working.

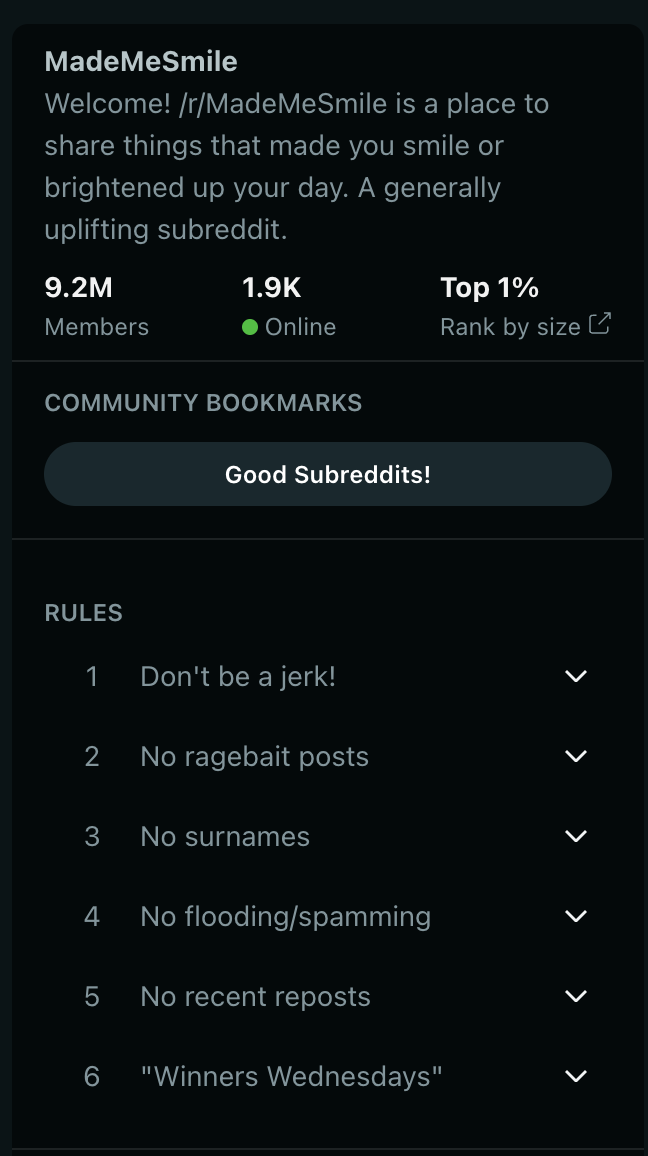

Reddit is a publicly traded company that bills itself as "the front page of the Internet." It's structured into "subreddits," forums dedicated to specific topics: cute animal pictures, F1 memes, sourdough... you name it, there's a subreddit for it. In the 2010s, Reddit earned a reputation as a bit of a cesspool because of its early laissez-faire approach to content moderation. Since then it has updated its policies and removed abusive subreddits, and while it still has dark corners, it's overall a much more pleasant place to be. Reddit sets ground rules for the whole platform: no harassment, no impersonation, label explicit content, and so on. Within those broad boundaries, communities can set their own rules, which most use to keep posts on topic. Subreddits are run by moderators who enforce the rules, delete violating posts, and can ban users from the subreddit.

This system generally keeps Reddit running smoothly… until it doesn't. The site's structure gives individual moderators complete power over their subreddits, and Reddit has a propensity to attract, shall we say, nonconformist or anti-authority users who have been known to make their voices heard. When a Reddit employee who helped run the Ask Me Anything subreddit was fired, almost 300 subreddits set themselves to private in protest, keeping other users from seeing the pages and prompting an apology from the CEO.

Something similar happened when Reddit announced it would start charging for access to its API, a move that would shut down popular third-party apps and moderation tools. Over 8,000 subreddits joined that blackout, but Reddit, under new management, waited it out and then began threatening and removing moderators who kept protesting. The community won some exemptions to the API charges, for example for accessibility-focused apps, but for the most part the rebellion was crushed. This was possible because Reddit is ultimately a centralized platform: though there's a veneer of community decentralization, the board and CEO can do pretty much whatever they want. What they can't do, though, is placate the people who are still angry about what Reddit did and how it responded.

Wikipedia is more resistant to widespread revolts. Because it's more decentralized, Wikipedia depends less on any individual contributor: if someone burns out or decides to stop editing for whatever reason, someone else can step in. No one owns an individual article the way moderators own a subreddit or maintainers own a software repository, so there's no corresponding sense of ownership over individual pieces of the community. The only things that could bring Wikipedia down are a full-scale rebellion… or a slow erosion of the editor community. Editor numbers have been flat for about a decade, at around 33,000 registered accounts making at least five edits in any given month.

What Wikipedia needs to worry about is not how to avoid angering its volunteer community, but how to make volunteering more exciting and gratifying. Unlike with peer review, I don't think the answer should involve compensation; editing Wikipedia isn't an expected part of any industry or job. But learning to edit Wikipedia isn't always easy, and the article "Criticism of Wikipedia" has a whole section about aspects of the community that can be unwelcoming. There are also technical hurdles: while writing this, I tried to create an account to edit a page, only to be told that my IP address had been blocked for some unknown reason.

Wikipedia also has a large untapped pool of potential editors: women. Only 10-20% of Wikipedia editors are female. The Wikimedia Foundation is well aware of this, but its efforts to recruit more women editors haven't succeeded. Meanwhile, gender bias remains a problem in the articles themselves: initiatives like Women in Red are working to correct it, but still only 19% of biographies on Wikipedia are about women. Getting more women and gender-diverse people to edit Wikipedia would help shore up its editor numbers and, hopefully, make Wikipedia a better place.

Meanwhile, I wonder if decentralization could help peer review. Rather than the "hub-and-spoke" model most journals use, where an editor reaches out to individual peer reviewers to ask them to contribute, papers could go into a shared pool, and volunteer reviewers, perhaps organized by expertise, could select the ones that interest them. This is similar to the "bidding" system some conferences use. But conference reviewers have a built-in incentive to volunteer, since they get to say they served on the "Program Committee"; a journal pool would need an incentive of its own: either compensation, or a required number of completed reviews before you can submit a paper to a journal. Carrot, or stick, or some combination thereof. There are lots of potential issues with this system, not least how to make sure reviews are written by people who are actually experts in the field, but if it could lead to faster, more accurate peer reviews, I think it's worth exploring.
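To make the pool-and-bidding idea a bit more concrete, here's a rough Python sketch of how it might work, assuming a single shared pool, self-declared topic tags as a stand-in for expertise, and only the "stick" incentive (a fixed number of completed reviews before you can submit). Everything here is hypothetical: the names `ReviewPool`, `Researcher`, `REVIEWS_PER_PAPER`, and `CREDITS_TO_SUBMIT`, and the numbers, are made up for illustration and don't describe any existing journal or conference system.

```python
from dataclasses import dataclass, field

# Hypothetical parameters, chosen only for illustration.
REVIEWS_PER_PAPER = 2   # reviewers each paper needs before it leaves the pool
CREDITS_TO_SUBMIT = 3   # the "stick": completed reviews required to submit


@dataclass
class Researcher:
    name: str
    expertise: set[str]          # self-declared topics (an assumption, not verified)
    completed_reviews: int = 0


@dataclass
class Paper:
    paper_id: str
    topic: str
    reviewers: list[str] = field(default_factory=list)
    reviews_done: set[str] = field(default_factory=set)


class ReviewPool:
    """A shared pool where volunteers pick papers instead of being assigned them."""

    def __init__(self) -> None:
        self.papers: dict[str, Paper] = {}

    def submit(self, author: Researcher, paper: Paper) -> bool:
        # Only authors who have "paid in" enough reviews may submit.
        if author.completed_reviews < CREDITS_TO_SUBMIT:
            return False
        self.papers[paper.paper_id] = paper
        return True

    def _can_bid(self, reviewer: Researcher, paper: Paper) -> bool:
        # Match on expertise; a paper stays open until it has enough reviewers.
        return (paper.topic in reviewer.expertise
                and len(paper.reviewers) < REVIEWS_PER_PAPER
                and reviewer.name not in paper.reviewers)

    def browse(self, reviewer: Researcher) -> list[str]:
        """IDs of open papers in the reviewer's topics that they haven't claimed."""
        return [pid for pid, p in self.papers.items() if self._can_bid(reviewer, p)]

    def bid(self, reviewer: Researcher, paper_id: str) -> bool:
        paper = self.papers.get(paper_id)
        if paper is None or not self._can_bid(reviewer, paper):
            return False
        paper.reviewers.append(reviewer.name)
        return True

    def complete_review(self, reviewer: Researcher, paper_id: str) -> bool:
        # Credit is earned once per claimed paper, only after the review is done.
        paper = self.papers.get(paper_id)
        if (paper is None or reviewer.name not in paper.reviewers
                or reviewer.name in paper.reviews_done):
            return False
        paper.reviews_done.add(reviewer.name)
        reviewer.completed_reviews += 1
        return True


# Usage: alice has earned enough credits to submit; bob has not.
pool = ReviewPool()
alice = Researcher("alice", {"nlp"}, completed_reviews=3)
bob = Researcher("bob", {"nlp", "vision"})
carol = Researcher("carol", {"nlp"})

rejected = pool.submit(bob, Paper("p0", "vision"))  # False: bob owes reviews
accepted = pool.submit(alice, Paper("p1", "nlp"))   # True
pool.bid(bob, "p1")
pool.bid(carol, "p1")            # p1 now has its two reviewers and closes
pool.complete_review(bob, "p1")  # bob earns his first credit
```

The expertise check here just trusts whatever topics people list, which is exactly the weak point mentioned above; a real system would need some way to verify that reviewers actually know the field.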

I Reckon…

🎉⛷️🙈😞😥🚒🧃☸️💾🥌🐼🙊

(translation: that getting trapped in the emoji keyboard is a valid reason for a paper extension, right?)

[1] That said, the error rate needs to be put in perspective alongside the battlefield policy that authorizes 15-20 civilian casualties per target.

I apologize for the lack of alt text in this week’s newsletter. Substack appears to have a bug that corrupted photos when I tried to add alt text.

Thumbnail generated by DALL-E 3 via ChatGPT with the prompt “Give me an abstract textured painting of lavender in shades of lavender.”